What is a Sampler in Stable Diffusion?

Introduction to Stable Diffusion Samplers

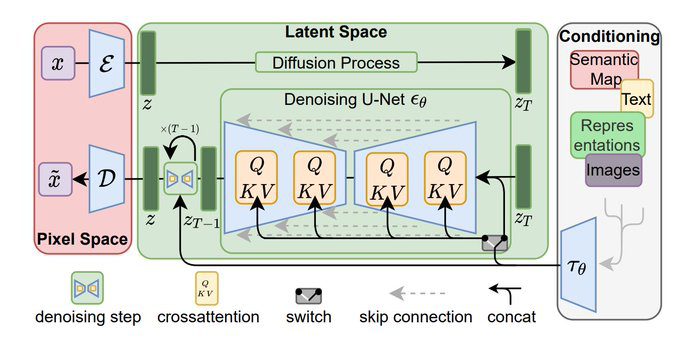

If you’re a casual user who just wants to create images easily and change the art styles, you can safely skip the samplers lesson. But if you’re a Stable Diffusion enthusiast who’s trying to squeeze higher quality and more control out of your images, you’re definitely in the right place. Samplers are the part of the diffusion software that pulls back the curtain on how the magic works. Let’s start with this clever diagram from Andrew Wong’s blog:

“To produce an image, Stable Diffusion first generates a completely random image in the latent space. The noise predictor then estimates the noise of the image. The predicted noise is subtracted from the image. This process is repeated a dozen times. In the end, you get a clean image.

This denoising process is called sampling because Stable Diffusion generates a new sample image in each step. The method used in sampling is called the sampler or sampling method.”
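The loop described in the quote can be sketched in a few lines of Python. This is only a toy, not how Stable Diffusion is implemented: the "image" is a short list of numbers, and `predict_noise` is a hypothetical stand-in that cheats by looking at a known target, where the real noise predictor is a trained neural network.

```python
import random

def predict_noise(image, target):
    """Toy stand-in for the noise predictor (assumption: a real model
    has no target to peek at, it estimates the noise from training)."""
    return [x - t for x, t in zip(image, target)]

def sample(target, steps=12, seed=0):
    rng = random.Random(seed)
    # Start from a completely random "image".
    image = [rng.gauss(0.0, 1.0) for _ in target]
    for step in range(steps):
        noise = predict_noise(image, target)
        # Subtract only part of the predicted noise; the final step removes the rest.
        fraction = 1.0 / (steps - step)
        image = [x - fraction * n for x, n in zip(image, noise)]
    return image

target = [0.2, 0.5, 0.9]
result = sample(target)
print(result)
```

How much noise to remove at each step, and in what order, is exactly the kind of decision that differs from one sampler to the next.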

What’s the best sampler for Stable Diffusion?

It’s a touchy subject among weebs, so perhaps it’s best to experiment yourself. There are many comparison images and videos at the bottom of this page.

To see a list of samplers in PirateDiffusion, type this command:

/sampler

Here are some very opinionated reviews to arm you with a general sense of what each does:

| Scheduler | Command | What each scheduler (sampler) does and why it’s popular |
| --- | --- | --- |
| DPM++ 2M Karras* | /sampler:dpm2m /karras | The latest, and recommended for most users creating realistic images. The DPM++ 2M sampler has an alternate operating mode called Karras that squeezes a little more detail out of this multi-step scheduler. The /karras parameter turns it on. |
| DPM++ 2M | /sampler:dpm2m | Same as above, without Karras mode. DPM stands for Diffusion Probabilistic Model solver. DPM++ 2M is a popular choice for creators seeking the highest level of detail. |
| UniPC | /sampler:upms | A great all-rounder. Where other samplers need 50 steps, UniPC can create a similar image in about 25, so your images appear faster. This also has a /karras mode. |
| DDIM | /sampler:ddim | |
| K EULER ANCESTRAL | /sampler:k_euler_a | The unpredictable one. The _A at the end refers to ancestral sampling, a process of producing samples by first sampling variables that have no parents, then sampling their child variables. In practice it adds fresh random noise at every step, so the image keeps changing as you add steps. Use this chaos sampling when all you want is more variety. |
| K EULER | /sampler:k_euler | The original K Euler is fast, but the results are simpler. It is perhaps comparable to DDIM, in many cases producing very similar results. This also has a /karras mode. |
| HEUN | /sampler:heun | Heun is often regarded as an improvement on Euler because it is more accurate, so you’ll get more detail, but it runs about twice as slow. This also has a /karras mode. |
| LCM** | /sampler:lcm | A special case: it can produce images with very few steps and low guidance, with slight tradeoffs in precision or quality, but it is by far the fastest sampler around. |

To make LCM work, also lower the guidance and steps like this:

/guidance:1.5 /steps:6 /nofix

LCM stands for Latent Consistency Model and is built for fast inference, with as few as four to eight steps. We did a whole video about it; check it out.

The /nofix in the prompt example above turns off the SDXL refiner, for brighter colors! It’s not required, but this combination may give you a more pleasing result.
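Several samplers above offer a /karras mode. The sketch below assumes that mode behaves like the noise schedule from Karras et al. (2022), which is what the name usually means in Stable Diffusion tools. The function names and the `sigma_min` and `sigma_max` values are illustrative assumptions, not PirateDiffusion settings.

```python
def karras_sigmas(n, sigma_min=0.03, sigma_max=14.6, rho=7.0):
    """Noise levels for n steps, highest first, spaced per Karras et al. (2022)."""
    min_inv = sigma_min ** (1.0 / rho)
    max_inv = sigma_max ** (1.0 / rho)
    return [(max_inv + i / (n - 1) * (min_inv - max_inv)) ** rho for i in range(n)]

def linear_sigmas(n, sigma_min=0.03, sigma_max=14.6):
    """Evenly spaced noise levels, for comparison only."""
    return [sigma_max + i / (n - 1) * (sigma_min - sigma_max) for i in range(n)]

karras = karras_sigmas(10)
linear = linear_sigmas(10)
for k, l in zip(karras, linear):
    print(f"karras {k:8.3f}   linear {l:8.3f}")
```

Both schedules start and end at the same noise levels, but the Karras spacing drops quickly at first and then takes many small steps at low noise, which is where fine detail gets resolved.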

How to compare samplers

Set the seed and guidance to the exact same values for every render, and generate at least 3 to 5 images. For example, here we are comparing DPM2M with and without the /karras variant using the model <sdxl> and the SDXL refiner at full crank, for full control. Right away, you can see that the light is more natural with /karras enabled, and the image is a bit better lit.

Is the composition more pleasing as well? That’s subjective. The decision to interpret “beautiful” as long flowing hair is not something you’d expect a sampler to make, and that’s really the fun of toying with these enthusiast-class parameters. We offer a few more comparisons below.

/render /seed:123456 /guidance:7 /sampler:dpm2m a portrait of a beautiful woman #sdxlreal

/render /seed:123456 /guidance:7 /sampler:dpm2m /karras a portrait of a beautiful woman #sdxlreal
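If you want to compare more than two samplers, it helps to generate the commands so that only the sampler changes. Here is a minimal sketch that reuses the command syntax shown above; the helper name `comparison_commands` is our own invention, and it only builds text for you to paste.

```python
def comparison_commands(prompt, samplers, seed=123456, guidance=7):
    """Build one /render command per sampler, with seed and guidance held fixed."""
    return [
        f"/render /seed:{seed} /guidance:{guidance} /sampler:{sampler} {prompt}"
        for sampler in samplers
    ]

commands = comparison_commands(
    "a portrait of a beautiful woman #sdxlreal",
    ["dpm2m", "dpm2m /karras", "k_euler", "heun"],
)
for command in commands:
    print(command)
```

Keeping the seed and guidance identical means any difference between the images comes from the sampler alone.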

** VIDEO: HOW TO USE THE SPECIAL LCM SAMPLER

Deeper into the tech

To better understand samplers, you must first understand how the diffusion process works. Think about a blurry image, and how your eye gradually brings it into focus. Computers do the same with diffusion: the general information is stored as noise, literally colorful dots that don’t make sense to the human eye. Consider this image: a sampler can process that noise and “focus” it into a photo. The sampler comes into play at the denoising step (bottom left).

In other words, diffusion models learn how to remove noise from images, each paired with a description of the image, as a way of learning what images look like, and can then generate new ones.

But how do you go from pure noise to an image *exactly*? There are many answers to this question, which is why there are so many different samplers available online.
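One way to see why different answers exist is to treat denoising as following a curve in small steps, and compare two ways of taking each step. The sketch below is a toy under our own assumptions: it applies the textbook Euler and Heun methods to a simple decay equation (dy/dt = -y) and is not the actual sampler code. It does show the tradeoff described for K EULER and HEUN: Heun evaluates the slope twice per step, so it costs about twice as much but lands closer to the true answer.

```python
import math

def slope(y):
    """Toy stand-in for the model call: the rate of change at the current point."""
    return -y

def euler(y, steps):
    h = 1.0 / steps
    calls = 0
    for _ in range(steps):
        y = y + h * slope(y)          # one slope evaluation per step
        calls += 1
    return y, calls

def heun(y, steps):
    h = 1.0 / steps
    calls = 0
    for _ in range(steps):
        k1 = slope(y)                 # slope at the start of the step
        k2 = slope(y + h * k1)        # slope at the predicted end of the step
        y = y + h * 0.5 * (k1 + k2)   # average the two
        calls += 2
    return y, calls

exact = math.exp(-1.0)
euler_y, euler_calls = euler(1.0, 10)
heun_y, heun_calls = heun(1.0, 10)
print("euler error", abs(euler_y - exact), "calls", euler_calls)
print("heun error ", abs(heun_y - exact), "calls", heun_calls)
```

In this toy, Heun at 10 steps is also more accurate than Euler at 20 steps, even though both make the same number of slope evaluations.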

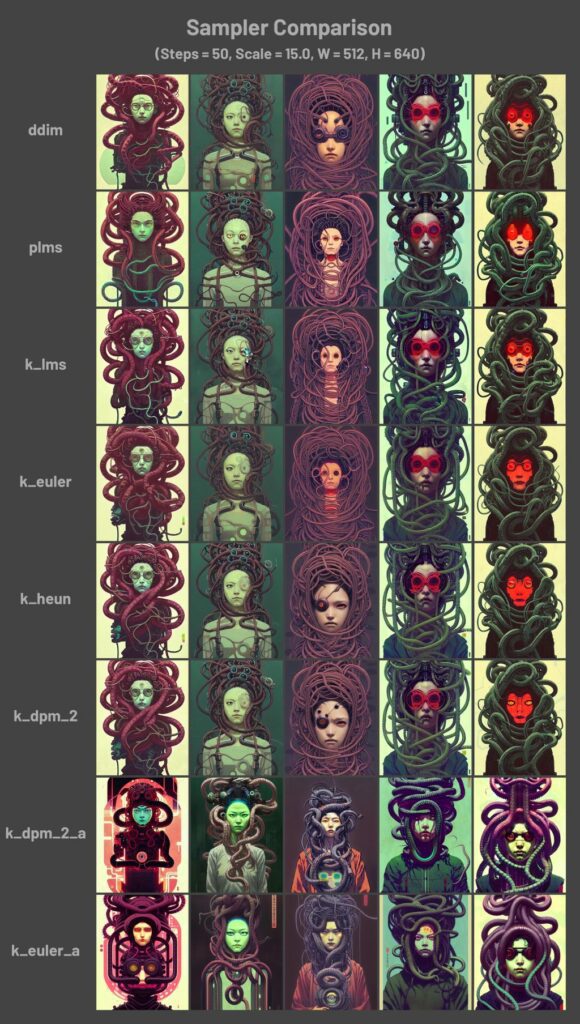

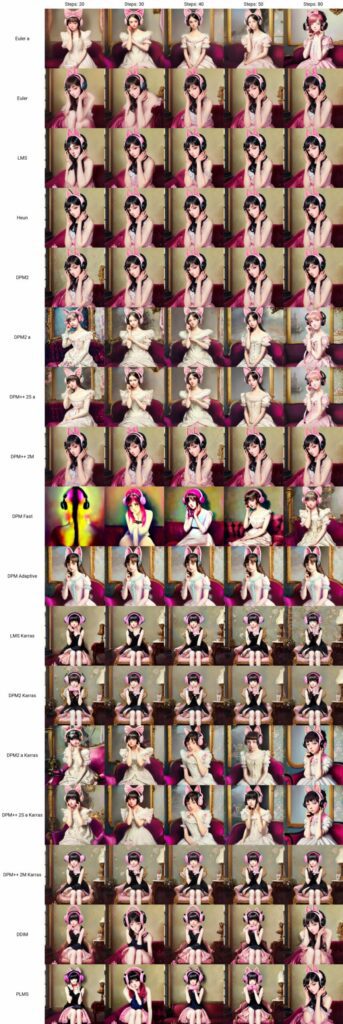

Comparisons

Can’t we just create a chart that compares each one? Remember that results vary greatly by prompt, so any chart we create would be inconclusive: what looks good in one prompt may look worse in another. The jury is still out on that topic, and there is much debate about it online. Here are more comparisons, including some unsupported or older samplers. The big takeaway is that you can save time and money by working with a more “creative” sampler at a reduced step count and still get similar results. Pictured below: /steps:20 /steps:30 /steps:40

For this specific prompt, some samplers render gentle details such as hair and eyes better than others, but that same sampler may not be as good for a building. If you have the free time, it’s a super interesting toy to play with. If you are in a hurry, just pick the one you like and add more steps, and you can get similar results.

There are tons of comparisons at higher resolutions on Google if you want to become a sampler savant. People make really detailed charts!

Go further: you can combine these with other techniques for a more striking image, such as SDXL /vass or one of our Upscalers.
