What is VASS (HDR color and composition correction)?

VASS is an alternative color and composition correction for SDXL. To use it, add the /vass parameter to your SDXL render.

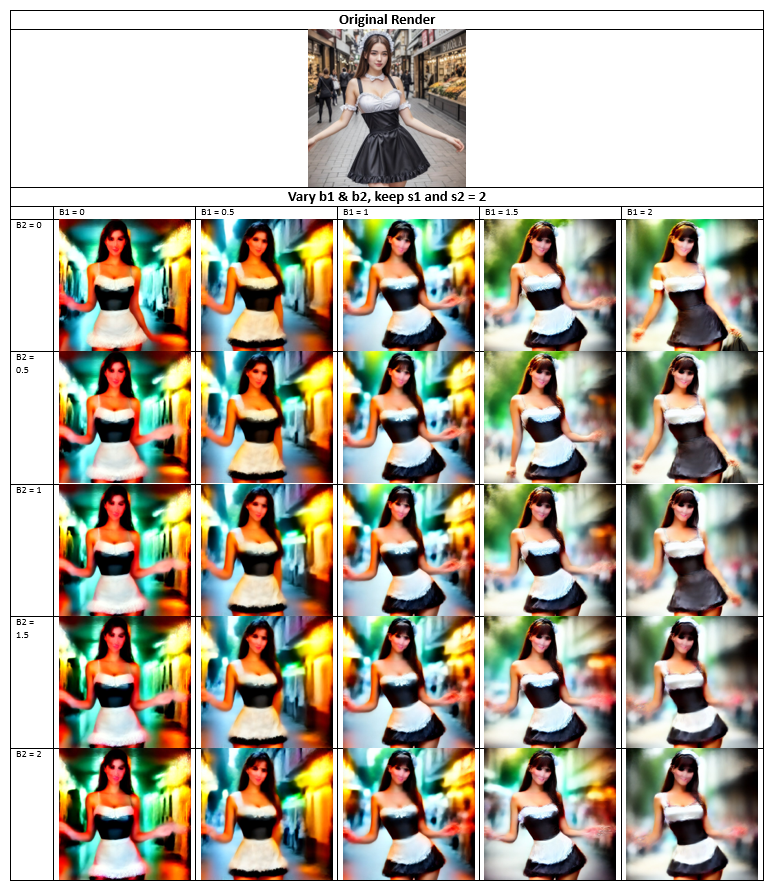

Whether it improves the image is highly subjective. If you prefer a more saturated image, VASS is probably not for you. If you find the image on the right more pleasing, this HDR “correction” may help you achieve your goals.

The /vass parameter is named after its creator. Timothy Alexis Vass is an independent researcher who has been exploring the SDXL latent space and has made some interesting observations. His aim is to correct color and improve the content of images. We have adapted his published code to run in PirateDiffusion.

Example of it in action:

As you can see in the figure above, more than the colors changed, even though the seed value is the same. The squirrel turned towards the viewer and, unfortunately, so did the cake. “Squirrel” is at the beginning of the prompt, so it carries more weight in the composition: the cake also shrank and the additional cakes were removed. We would be lying if we said these are all definite benefits, or compositional corrections that happen every time /vass is used. Rather, it's an example of how /vass can impact an image.

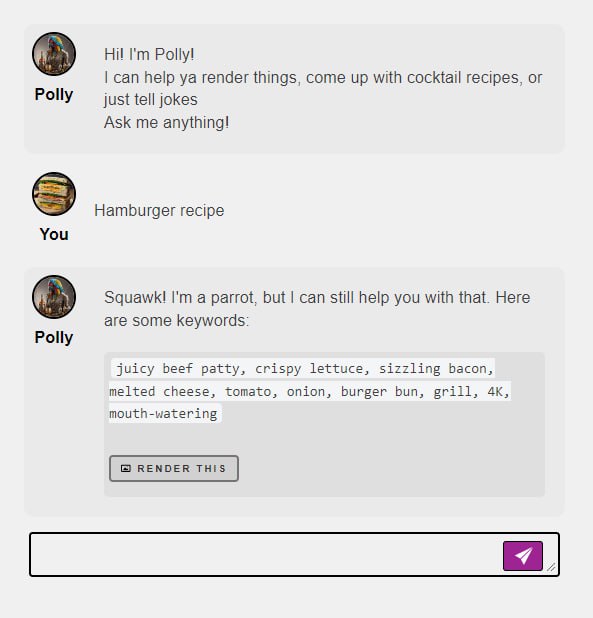

Try it for yourself:

/render a cool cat <sdxl> /vass

Limitations: This only works in SDXL.

To compare with and without Vass, lock the seed, sampler, and guidance to the same values as shown in the diagram above. Otherwise, the randomness from these parameters will give you an entirely different image every time.

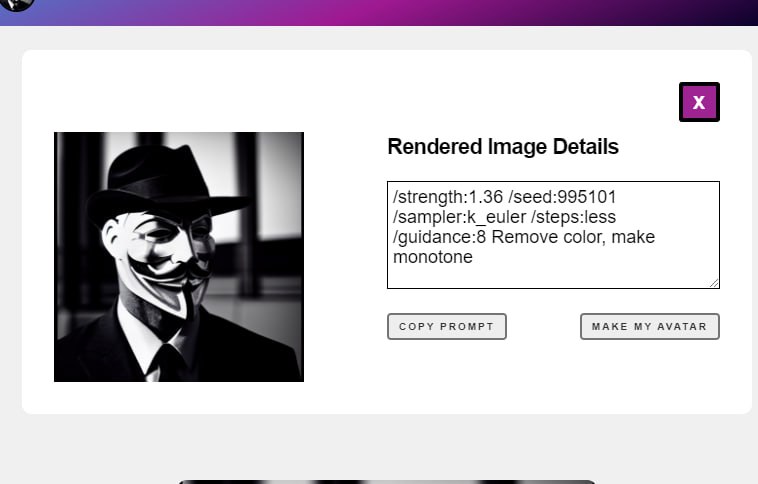
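
As a minimal sketch of such a comparison pair, the two renders below are identical except for /vass. This assumes the seed, guidance, and sampler are set with /seed, /guidance, and /sampler in the colon-separated form shown. The values, including the sampler name, are placeholders only, so substitute the ones from your own render.

```
/render a cool cat <sdxl> /seed:12345 /guidance:7 /sampler:dpm2m
/render a cool cat <sdxl> /seed:12345 /guidance:7 /sampler:dpm2m /vass
```

Because everything else is held constant, any difference between the two results can be attributed to /vass.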

Tip: You can also disable the SDXL refiner with /nofix to take upscaling into your own hands for more control, like this:

/render a cool cat <sdxl> /vass /nofix

Why and when to use Vass

Try it on SDXL images that are too yellow or off-center, or whose color range feels limited. You should see better vibrance and cleaned-up backgrounds.

Also see: VAE runtime swap